Simplified Tag set (5.2.3-5.2.6)

Using the simplified tag set:

>>> import nltk
>>> from nltk.corpus import brown
>>> brown_news_tagged = brown.tagged_words(categories='news', simplify_tags=True)
>>> tag_fd = nltk.FreqDist(tag for (word, tag) in brown_news_tagged)
>>> tag_fd.keys()
['N', 'DET', 'P', 'NP', 'V', 'ADJ', ',', '.', 'CNJ', 'PRO', 'ADV', 'VD', 'NUM', 'VN', 'VG', 'TO', 'WH', 'MOD', '``', "''", 'VBZ', '', '*', ')', '(', 'EX', ':', 'FW', "'", 'UH', 'VB+PPO']

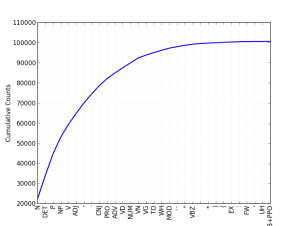

Also try a cumulative plot of the tag frequencies:

>>> tag_fd.plot(cumulative=True)

According to the plot, the 10 most frequent tags account for more than 90% of the tokens.
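The same share can be computed as a number instead of being read off the plot. This is only a minimal sketch in plain Python: `toy_tagged` is hypothetical hand-made data (not Brown), and `coverage` is a helper name I made up. With the real corpus you would pass `brown_news_tagged` instead.

```python
from collections import Counter

# Hypothetical toy data standing in for brown_news_tagged: (word, tag) pairs.
toy_tagged = [
    ('The', 'DET'), ('jury', 'N'), ('said', 'VD'), ('the', 'DET'),
    ('election', 'N'), ('was', 'VD'), ('fair', 'ADJ'), ('.', '.'),
    ('Voters', 'N'), ('in', 'P'), ('Atlanta', 'NP'), ('agreed', 'VD'),
]

def coverage(tagged, k):
    """Return the fraction of tokens covered by the k most frequent tags."""
    tagged = list(tagged)
    if not tagged:
        return 0.0
    counts = Counter(tag for (_, tag) in tagged)
    top_k = sum(n for (_, n) in counts.most_common(k))
    return top_k / float(len(tagged))

# Share of tokens covered by the 3 most frequent tags in the toy data.
share = coverage(toy_tagged, 3)
print(share)
```

Calling `coverage(brown_news_tagged, 10)` should give the value that the cumulative plot shows at the tenth tag.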

Noun(5.2.4):

>>> word_tag_pairs = nltk.bigrams(brown_news_tagged)
>>> list(nltk.FreqDist(a[1] for (a, b) in word_tag_pairs if b[1] == 'N'))
['DET', 'ADJ', 'N', 'P', 'NP', 'NUM', 'PRO', 'CNJ', '.', ',', 'VG', 'VN', 'V', 'VD', 'ADV', '``', 'WH', 'VBZ', "''", '(', '', ')', ':', 'FW', "'", '*', 'MOD', 'TO']

This checks which tags come immediately before a noun ('N'), listed from most to least frequent. The most frequent is DET (determiners like "a" and "the"), the next is adjective ('ADJ'), and so on.
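The bigram trick can be written without NLTK by zipping the token list with itself shifted by one. A minimal sketch, assuming hypothetical toy data (`toy_tagged`) and a helper name of my own (`tags_before`):

```python
from collections import Counter

# Hypothetical toy data; with NLTK this would be brown_news_tagged.
toy_tagged = [
    ('The', 'DET'), ('old', 'ADJ'), ('jury', 'N'), ('praised', 'VD'),
    ('the', 'DET'), ('election', 'N'), ('and', 'CNJ'), ('a', 'DET'),
    ('report', 'N'), ('.', '.'),
]

def tags_before(tagged, target_tag):
    """Count the tags of tokens that immediately precede a target_tag token."""
    tagged = list(tagged)
    pairs = zip(tagged, tagged[1:])  # consecutive (previous, current) tokens
    return Counter(prev[1] for (prev, cur) in pairs if cur[1] == target_tag)

before_nouns = tags_before(toy_tagged, 'N')
print(before_nouns.most_common())
```

Unlike the list of keys above, `most_common()` also shows the counts, so you can see how far DET is ahead of the other tags.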

How about verbs ('V')? (5.2.5):

Which verbs are used most frequently in news text? This time the tagged Wall Street Journal sample (treebank) is used:

>>> wsj = nltk.corpus.treebank.tagged_words(simplify_tags=True)
>>> word_tag_fd = nltk.FreqDist(wsj)
>>> [word + "/" + tag for (word, tag) in word_tag_fd if tag.startswith('V')]
['is/V', 'said/VD', 'are/V', 'was/VD', 'be/V', 'has/V', 'have/V', 'says/V', ....

Apart from forms of "be" and "have", the most frequent verbs are "said" and "says", as I expected. Imagine something like 'Prime Minister Mr. xxxxx said...'; we hear this kind of statement in the news very often.

Next, check which tags are assigned to a specific word.

>>> cfd1 = nltk.ConditionalFreqDist(wsj)
>>> cfd1['yield'].keys()
['V', 'N']
>>> cfd1['cut'].keys()
['V', 'VD', 'N', 'VN']
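Conceptually, a conditional frequency distribution built from (word, tag) pairs is a table with one counter per word. Here is a rough plain-Python sketch of that idea, not NLTK's actual implementation; `toy_tagged` and `tags_by_word` are hypothetical names and data.

```python
from collections import Counter, defaultdict

# Hypothetical toy data standing in for the tagged WSJ sample.
toy_tagged = [
    ('the', 'DET'), ('yield', 'N'), ('rose', 'VD'), ('.', '.'),
    ('bonds', 'N'), ('yield', 'V'), ('more', 'ADJ'), ('.', '.'),
    ('they', 'PRO'), ('cut', 'VD'), ('rates', 'N'), ('.', '.'),
    ('a', 'DET'), ('cut', 'N'), ('was', 'VD'), ('expected', 'VN'), ('.', '.'),
]

def tags_by_word(tagged):
    """Map each word (the condition) to a Counter of the tags seen with it."""
    table = defaultdict(Counter)
    for word, tag in tagged:
        table[word][tag] += 1
    return table

table = tags_by_word(toy_tagged)
print(sorted(table['yield']))
print(sorted(table['cut']))
```

Swapping the pair order while building the table, as done for `cfd2` in the next step, gives the reverse lookup from a tag to its words.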

Which past participle ('VN') words are most frequently used? Swap the pair order so that the tag becomes the condition:

>>> cfd2 = nltk.ConditionalFreqDist((tag, word) for (word, tag) in wsj)
>>> cfd2['VN'].keys()
['been', 'expected', 'made', 'compared', 'based', 'priced', 'used', 'sold', ....

Which words occur both as past tense ('VD') and as past participle ('VN')?

>>> [w for w in cfd1.conditions() if 'VD' in cfd1[w] and 'VN' in cfd1[w]]
['Asked', 'accelerated', 'accepted', 'accused', 'acquired', 'added', 'adopted', 'advanced', 'advised', ....
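The "has both tags" test is just a subset check on the set of tags seen for each word. A minimal sketch without NLTK, assuming hypothetical toy data and a made-up helper name (`words_with_all_tags`):

```python
from collections import defaultdict

# Hypothetical toy data standing in for the tagged WSJ sample.
toy_tagged = [
    ('He', 'PRO'), ('kicked', 'VD'), ('it', 'PRO'), ('.', '.'),
    ('It', 'PRO'), ('was', 'VD'), ('kicked', 'VN'), ('.', '.'),
    ('She', 'PRO'), ('has', 'V'), ('been', 'VN'), ('here', 'ADV'), ('.', '.'),
    ('They', 'PRO'), ('added', 'VD'), ('what', 'WH'), ('was', 'VD'),
    ('added', 'VN'), ('.', '.'),
]

def words_with_all_tags(tagged, required):
    """Return the sorted words that occur with every tag in `required`."""
    seen = defaultdict(set)  # word -> set of tags seen with that word
    for word, tag in tagged:
        seen[word].add(tag)
    required = set(required)
    return sorted(w for (w, tags) in seen.items() if required <= tags)

both = words_with_all_tags(toy_tagged, ['VD', 'VN'])
print(both)
```

In the toy data "was" only appears as 'VD' and "been" only as 'VN', so neither is reported.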

Pick one of those words and look at the tokens around it to see how it is used as 'VD' and as 'VN'.

>>> idx1 = wsj.index(('kicked', 'VD'))
>>> wsj[idx1-4:idx1+1]
[('While', 'P'), ('program', 'N'), ('trades', 'N'), ('swiftly', 'ADV'), ('kicked', 'VD')]
>>> idx2 = wsj.index(('kicked', 'VN'))
>>> wsj[idx2-4:idx2+1]
[('head', 'N'), ('of', 'P'), ('state', 'N'), ('has', 'V'), ('kicked', 'VN')]

Last topic in this section: for each past participle, show the two word-tag pairs that precede its first occurrence.

>>> for word in cfd2['VN'].keys():
...     idx3 = wsj.index((word, 'VN'))
...     print wsj[idx3-2:idx3+1],
...
[('He', 'PRO'), ('had', 'VD'), ('been', 'VN')] [('times', 'N'), ('the', 'DET'), ('expected', 'VN')] ....

This was very time-consuming, probably because every wsj.index() call searches the corpus from the beginning again. Maybe the logic is not clever enough...
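One possible way to speed this up is to walk the corpus only once, remember where each 'VN' word first appears, and slice afterwards. I have not timed this against the real corpus, so treat it as a sketch; `toy_tagged` is hypothetical data, `first_contexts` is a name I made up, and it assumes the tagged words fit in a list.

```python
# Hypothetical toy data standing in for the tagged WSJ sample.
toy_tagged = [
    ('He', 'PRO'), ('had', 'VD'), ('been', 'VN'), ('there', 'ADV'), ('.', '.'),
    ('It', 'PRO'), ('was', 'VD'), ('expected', 'VN'), ('.', '.'),
    ('He', 'PRO'), ('has', 'V'), ('been', 'VN'), ('away', 'ADV'), ('.', '.'),
]

def first_contexts(tagged, target_tag, width=2):
    """For each word tagged target_tag, return the tokens ending at its first
    occurrence, including up to `width` preceding tokens."""
    tagged = list(tagged)  # allow indexing and slicing
    first_pos = {}         # word -> index of its first target_tag occurrence
    for i, (word, tag) in enumerate(tagged):
        if tag == target_tag and word not in first_pos:
            first_pos[word] = i
    # max(0, ...) keeps the slice valid when the word is near the start.
    return dict(
        (word, tagged[max(0, i - width):i + 1])
        for (word, i) in first_pos.items()
    )

contexts = first_contexts(toy_tagged, 'VN')
print(contexts['been'])
print(contexts['expected'])
```

The single pass replaces one full search per word, and the `max(0, ...)` guard avoids the negative start index that `idx3-2` would produce for a word in the first two positions.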